When you install an HPE server with the VMware custom image for HPE servers, you automatically get all the HPE tools for configuring the hardware. Neat.

Here is a small guide on how to clean and set up the array. The ssacli binary is located in /opt/smartstorageadmin/ssacli/bin on the ESXi server. Start there and follow the commands below.

Show the existing config:

[root@esx2:/opt/smartstorageadmin/ssacli/bin] ./ssacli ctrl slot=0 ld all show

Smart Array P440ar in Slot 0 (Embedded)

Array A

logicaldrive 1 (279.37 GB, RAID 1, Failed)

Array B

logicaldrive 2 (3.27 TB, RAID 1+0, OK)

Delete the existing logical drives:

[root@esx2:/opt/smartstorageadmin/ssacli/bin] ./ssacli ctrl slot=0 ld 1 delete

Warning: Deleting an array can cause other array letters to become renamed.

E.g. Deleting array A from arrays A,B,C will result in two remaining

arrays A,B ... not B,C

Warning: Deleting the specified device(s) will result in data being lost.

Continue? (y/n) y

[root@esx2:/opt/smartstorageadmin/ssacli/bin] ./ssacli ctrl slot=0 ld 2 delete

Warning: Deleting the specified device(s) will result in data being lost.

Continue? (y/n) y
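With more than a couple of logical drives, typing each delete by hand gets tedious. The sketch below is one way to generate the delete commands from the `ld all show` output. It only prints the commands so they can be reviewed first. The function name `ld_delete_cmds` is made up for this example, and it assumes the output format shown above and that `awk` is available in your ESXi shell. The `forced` keyword skips the y/n confirmation, so read the printed lines carefully before running any of them.

```shell
# Hypothetical helper: read `ssacli ctrl slot=N ld all show` output on stdin
# and print one delete command per logical drive found. Nothing is executed.
ld_delete_cmds() {
    slot="$1"
    awk -v slot="$slot" '$1 == "logicaldrive" {
        printf "./ssacli ctrl slot=%s ld %s delete forced\n", slot, $2
    }'
}

# Sample input copied from the output above, so the sketch runs without ssacli.
sample_ld='Smart Array P440ar in Slot 0 (Embedded)

Array A

logicaldrive 1 (279.37 GB, RAID 1, Failed)

Array B

logicaldrive 2 (3.27 TB, RAID 1+0, OK)'

printf '%s\n' "$sample_ld" | ld_delete_cmds 0
```

On the server itself you would pipe the real output in instead of the sample, for example `./ssacli ctrl slot=0 ld all show | ld_delete_cmds 0`.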

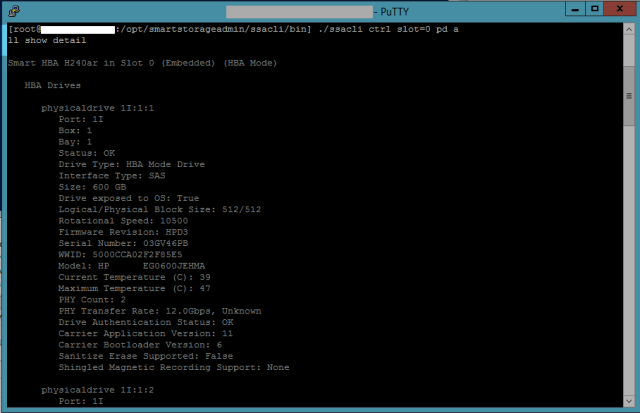

Show all physical drives in the server:

[root@esx2:/opt/smartstorageadmin/ssacli/bin] ./ssacli ctrl slot=0 pd all show

Smart Array P440ar in Slot 0 (Embedded)

Array A

physicaldrive 1I:1:9 (port 1I:box 1:bay 9, SAS HDD, 900 GB, OK)

physicaldrive 1I:1:10 (port 1I:box 1:bay 10, SAS HDD, 900 GB, OK)

physicaldrive 1I:1:11 (port 1I:box 1:bay 11, SAS HDD, 900 GB, OK)

physicaldrive 1I:1:12 (port 1I:box 1:bay 12, SAS HDD, 900 GB, OK)

physicaldrive 1I:1:13 (port 1I:box 1:bay 13, SAS HDD, 900 GB, OK)

physicaldrive 1I:1:14 (port 1I:box 1:bay 14, SAS HDD, 900 GB, OK)

physicaldrive 1I:1:15 (port 1I:box 1:bay 15, SAS HDD, 900 GB, OK)

physicaldrive 1I:1:16 (port 1I:box 1:bay 16, SAS HDD, 900 GB, OK)

Create a new volume with available physical drives:

[root@esx2:/opt/smartstorageadmin/ssacli/bin] ./ssacli ctrl slot=0 create type=ld drives=1I:1:9,1I:1:10,1I:1:11,1I:1:12,1I:1:13,1I:1:14,1I:1:15,1I:1:16 raid=6

Warning: Controller cache is disabled. Enabling logical drive cache will not take effect until this has been resolved.
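Typing eight drive IDs by hand is easy to get wrong, so here is a rough sketch of building the `drives=` list from the `pd all show` output, plus the nominal RAID 6 capacity for these disks. The helper names `pd_list` and `raid6_usable_gb` are invented for this example, and it again assumes `awk` is available. The capacity figure is simple arithmetic in decimal GB; the size the controller reports will differ somewhat.

```shell
# Hypothetical helper: join every physical drive ID on stdin with commas.
pd_list() {
    awk '$1 == "physicaldrive" { printf "%s%s", sep, $2; sep = "," }
         END { print "" }'
}

# RAID 6 spends two drives' worth of space on parity:
# usable = (drive count - 2) * drive size.
raid6_usable_gb() {
    echo $(( ($1 - 2) * $2 ))
}

# Sample input copied from the listing above, so this runs without ssacli.
sample_pd='physicaldrive 1I:1:9 (port 1I:box 1:bay 9, SAS HDD, 900 GB, OK)
physicaldrive 1I:1:10 (port 1I:box 1:bay 10, SAS HDD, 900 GB, OK)
physicaldrive 1I:1:11 (port 1I:box 1:bay 11, SAS HDD, 900 GB, OK)
physicaldrive 1I:1:12 (port 1I:box 1:bay 12, SAS HDD, 900 GB, OK)
physicaldrive 1I:1:13 (port 1I:box 1:bay 13, SAS HDD, 900 GB, OK)
physicaldrive 1I:1:14 (port 1I:box 1:bay 14, SAS HDD, 900 GB, OK)
physicaldrive 1I:1:15 (port 1I:box 1:bay 15, SAS HDD, 900 GB, OK)
physicaldrive 1I:1:16 (port 1I:box 1:bay 16, SAS HDD, 900 GB, OK)'

drives=$(printf '%s\n' "$sample_pd" | pd_list)

# Print the create command for review rather than running it.
echo "./ssacli ctrl slot=0 create type=ld drives=$drives raid=6"
echo "Nominal usable capacity: $(raid6_usable_gb 8 900) GB"
```

For the eight 900 GB drives here, the nominal figure works out to (8 - 2) x 900 = 5400 GB. If every drive on the controller is free, ssacli also accepts `drives=allunassigned`, which avoids building the list at all.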

Conclusion

Nice and easy. Give the HPE ssacli manual a read for more commands (page 57 onward), or use ssacli help.

[root@esx2:/opt/smartstorageadmin/ssacli/bin] ./ssacli help

CLI Syntax

A typical SSACLI command line consists of three parts: a target device,

a command, and a parameter with values if necessary. Using angle brackets to

denote a required variable and plain brackets to denote an optional

variable, the structure of a typical SSACLI command line is as follows:

<target> <command> [parameter=value]

<target> is of format:

[controller all|slot=#|serialnumber=#]

[array all|<id>]

...........
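To connect the syntax description to the commands used in this post, here is a small sketch that splits each one into target, command, and parameters. The function `ssacli_cmd` is purely illustrative and only prints the assembled command line; it is not part of ssacli.

```shell
# Hypothetical helper: assemble an ssacli command line from its three parts.
ssacli_cmd() {
    target="$1"; verb="$2"; params="$3"
    # The parameter part is optional, so only append it when present.
    echo "./ssacli $target $verb${params:+ $params}"
}

# target = controller + logical drive(s), command = show / delete
ssacli_cmd "ctrl slot=0 ld all" "show"
ssacli_cmd "ctrl slot=0 ld 1" "delete"

# target = controller, command = create, parameters = type, drives, raid
ssacli_cmd "ctrl slot=0" "create" \
    "type=ld drives=1I:1:9,1I:1:10,1I:1:11,1I:1:12,1I:1:13,1I:1:14,1I:1:15,1I:1:16 raid=6"
```

Read this way, `ctrl slot=0 ld 1` is the target and `delete` is the command, while the create call is the only one in this post that needs parameters.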